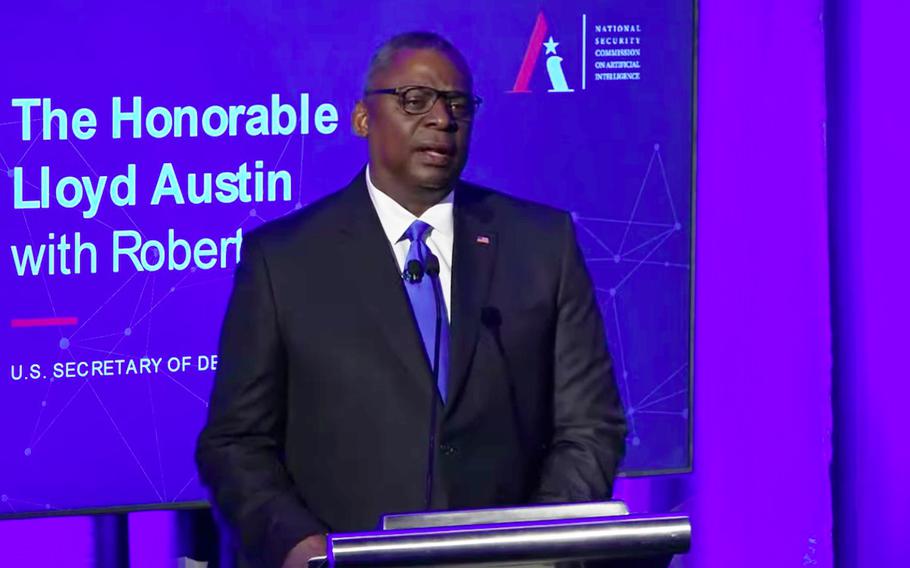

Defense Secretary Lloyd Austin addresses the National Security Commission on Artificial Intelligence at its summit on global emerging technology on July 13, 2021, in Washington, D.C. (Screen capture from video)

WASHINGTON — Artificial intelligence will be key to preventing future conflicts as China increases its efforts to develop the technology, Defense Secretary Lloyd Austin said Tuesday during a summit on emerging technologies.

Austin, speaking at the summit hosted by the National Security Commission on Artificial Intelligence, said the Defense Department must focus on incorporating AI into all aspects of warfare, especially as “China’s leaders have made clear they intend to be globally dominant in AI by the year 2030.”

“Beijing already talks about using AI for a range of missions, from surveillance to cyberattacks to autonomous weapons,” the defense secretary said.

The Pentagon’s proposed budget for 2022 asked for $112 billion in research, development, testing and evaluation — the department’s largest-ever request for such priorities.

In the request, “AI is one of the department’s top tech modernization priorities,” Austin said. “Over the next five years, the department will invest nearly $1.5 billion in the center’s efforts to accelerate our adoption of AI.”

He said the department is focusing on AI development because it will be key to deterring adversaries in “the future fight.”

“Tech advances like AI are changing the face and the pace of warfare, but we believe that we can responsibly use AI as a force multiplier — one that helps us to make decisions faster and more rigorously, to integrate across all domains, and to replace old ways of doing business,” Austin said.

In his speech, Austin highlighted some of the 600 AI efforts already underway in the Defense Department, including the algorithm-driven Pathfinder project that helps synthesize information to support joint warfighting.

“The Pathfinder project … helps us better detect airborne threats by using AI to fuse data from military, commercial and government sensors in real time,” Austin said.

The Pentagon’s use of AI also extends beyond tactical warfare. Austin said the department recently used AI to help combat the coronavirus pandemic with Project Salus, “a predictive tool for finding patterns in [virus] data that the department built from scratch with some top Silicon Valley companies starting last March.”

Still, Austin emphasized moving forward with AI ethically, pledging to “watch out for unintended consequences” and “immediately adjust, improve, or even disable any AI system that isn’t behaving the way that we intend.”

“Our development, deployment, and use of AI must always be responsible, equitable, traceable, reliable and governable,” Austin said. “AI is going to change many things about military operations, but nothing is going to change America’s commitment to the laws of war and the principles of our democracy.”